Jan 19 2018

Researchers from the National University of Singapore (NUS) have created a novel microchip, named EQSCALE, that captures visual details from video frames at very low power. The video feature extractor consumes 20 times less power than the best existing chips, so it needs a battery 20 times smaller, and it could bring the size of smart vision systems down to the millimeter range. For instance, it can be powered continuously by a millimeter-sized solar cell, with no battery replacement required.

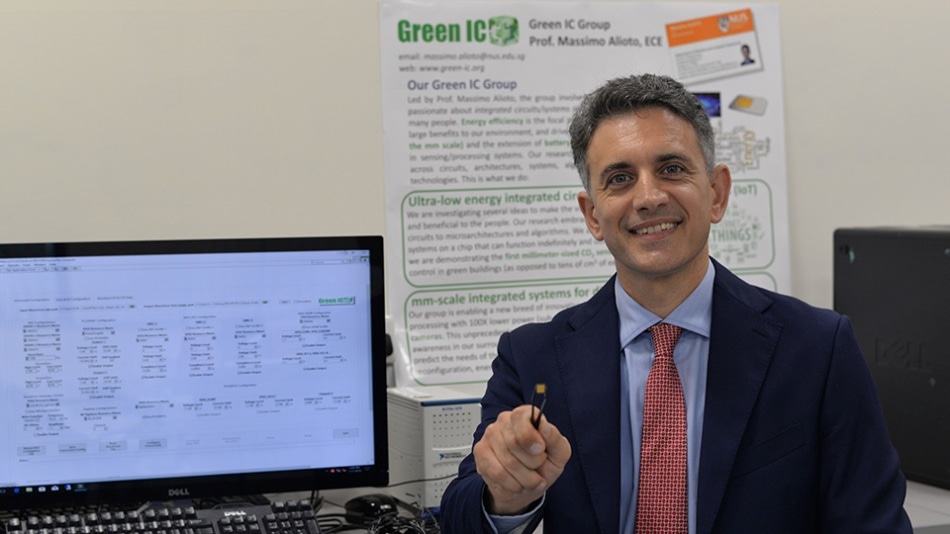

Associate Professor Massimo Alioto and his team from NUS Engineering developed a tiny vision processing chip, EQSCALE, which uses 20 times less power than existing technology. (Image credit: National University of Singapore)

Headed by Associate Professor Massimo Alioto from the Department of Electrical and Computer Engineering at the NUS Faculty of Engineering, the team’s discovery is a key step forward in creating millimeter-sized smart cameras with near-perpetual lifespan. It will also open doors for cost-effective Internet of Things (IoT) applications, such as ubiquitous safety surveillance in airports and key infrastructure, workplace safety, building energy management, and elderly care.

IoT is a fast-growing technology wave that uses massively distributed sensors to make our environment smarter and human-centric. Vision electronic systems with long lifetime are currently not feasible for IoT applications due to their high power consumption and large size. Our team has addressed these challenges through our tiny EQSCALE chip and we have shown that ubiquitous and always-on smart cameras are viable. We hope that this new capability will accelerate the ambitious endeavour of embedding the sense of sight in the IoT, as well as the realization of the Smart Nation vision in Singapore.

Associate Professor Massimo Alioto

Tiny vision processing chip that works non-stop

A video feature extractor takes the visual details captured by a smart camera and converts them into a much smaller set of points of interest and edges for further analysis. Video feature extraction is at the heart of any computer vision system that automatically detects, classifies, and tracks objects in the visual scene. It has to be performed continuously on every single frame, so it sets the minimum power of a smart vision system and hence the minimum system size.

The power consumption of previous state-of-the-art feature extraction chips ranges from several milliwatts to hundreds of milliwatts, which correspond to the typical power consumption of a smartwatch and a smartphone, respectively. To enable near-perpetual operation, devices can be powered by solar cells that harvest energy from natural lighting in living spaces. However, at these power levels the solar cells would have to be on the centimeter scale or larger, which places a major limit on the miniaturization of such vision systems. Shrinking them down to the millimeter scale requires reducing the power consumption to well below one milliwatt.

The NUS Engineering team’s microchip, EQSCALE, can perform continuous feature extraction at 0.2 milliwatts, 20 times less power than the best existing technology. This translates into a major advance in the level of miniaturization for smart vision systems. The novel feature extractor is smaller than a millimeter on each side, and can be powered continuously by a solar cell that measures just a few millimeters in size.
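The figures above can be cross-checked with a few lines of arithmetic. The sketch below takes only the 0.2 milliwatt and 20-times figures from the article; the function names are hypothetical, and the size estimate assumes a square solar cell whose harvested power is proportional to its area under fixed lighting, which is a simplification rather than anything the NUS team states.

```python
import math

EQSCALE_POWER_MW = 0.2  # continuous feature extraction power, from the article
POWER_RATIO = 20        # "20 times" reduction, from the article


def implied_baseline_power_mw(chip_mw=EQSCALE_POWER_MW, ratio=POWER_RATIO):
    """Power of the prior chip implied by the two figures in the article."""
    return chip_mw * ratio


def harvester_side_shrink(ratio=POWER_RATIO):
    """Factor by which the side of a square solar cell can shrink.

    Assumption (not from the article): harvested power scales linearly
    with cell area, so the side length scales with the square root of power.
    """
    return math.sqrt(ratio)


if __name__ == "__main__":
    print(f"Implied prior-chip power: {implied_baseline_power_mw():.1f} mW")
    print(f"Solar cell side can shrink by about {harvester_side_shrink():.2f}x")
```

Under these assumptions the implied baseline is a few milliwatts, in line with the "several milliwatts" quoted for earlier chips, and a 20-times power cut shrinks each side of the solar cell by a factor of roughly 4.5.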

This technological breakthrough is achieved through the concept of energy-quality scaling, where the trade-off between energy consumption and quality in the extraction of features is adjusted. This mimics the dynamic change in the level of attention with which humans observe the visual scene, processing it with different levels of detail and quality depending on the task at hand. Energy-quality scaling allows correct object recognition even when a substantial number of points of interest are missed due to the degraded quality of the target.

Associate Professor Massimo Alioto
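The trade-off described above can be illustrated with a toy example. This is a minimal sketch and not the EQSCALE design: the `stride` parameter is a hypothetical quality knob, the number of pixel comparisons stands in as a rough proxy for energy, and the simple gradient test is a placeholder for a real feature detector.

```python
def extract_edges(frame, stride=1, threshold=50):
    """Return (edge_points, pixel_ops) for a 2-D list of grey levels.

    stride is a hypothetical quality knob: 1 examines every pixel,
    larger values examine a coarser grid and therefore do less work.
    """
    rows, cols = len(frame), len(frame[0])
    points, ops = [], 0
    for y in range(0, rows - stride, stride):
        for x in range(0, cols - stride, stride):
            # Gradient against the next sampled neighbour to the right and below.
            gx = frame[y][x + stride] - frame[y][x]
            gy = frame[y + stride][x] - frame[y][x]
            ops += 1  # one visited pixel, used here as an energy proxy
            if abs(gx) + abs(gy) >= threshold:
                points.append((x, y))
    return points, ops


def make_frame(size=16, lo=4, hi=12):
    """Synthetic test frame: a bright square on a dark background."""
    return [[200 if lo <= x < hi and lo <= y < hi else 0
             for x in range(size)] for y in range(size)]


def sweep(strides=(1, 2, 4)):
    """Run the extractor at several quality levels on the same frame."""
    frame = make_frame()
    results = []
    for stride in strides:
        points, ops = extract_edges(frame, stride)
        results.append((stride, ops, len(points)))
    return results


if __name__ == "__main__":
    for stride, ops, n_points in sweep():
        print(f"stride={stride}: {ops} pixel ops, {n_points} edge points")
```

As the stride grows, the extractor does far less work and reports fewer edge points, yet the remaining points still trace the outline of the square. That is the spirit of the claim that recognition can survive a substantial number of missed points of interest.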

Next steps

The development of EQSCALE is an important step towards the future demonstration of millimeter-sized vision systems that could function indefinitely. The NUS research team is seeking to create a miniaturized computer vision system that comprises smart cameras fitted with vision capabilities enabled by the microchip, as well as a machine learning engine that understands the visual scene. The researchers' final goal is to enable massively distributed vision systems for wide-area and ubiquitous visual monitoring, going far beyond the traditional concept of cameras.