Infographic for System Design for Pose Determination of Spacecraft Using Time-of-Flight Sensors. Image Credit: Space: Science & Technology.

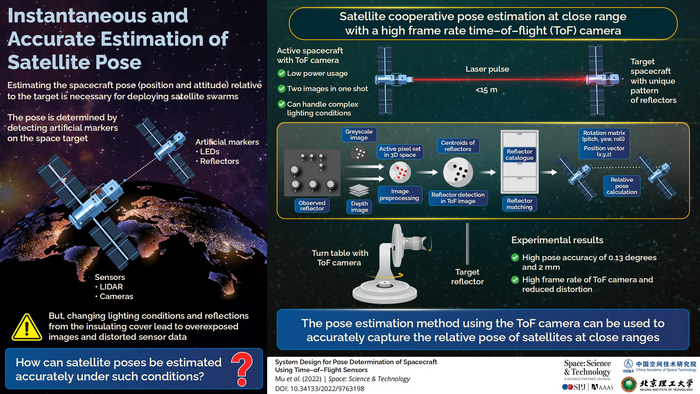

Determining the pose of a spacecraft is critical in many mission scenarios. Pose determination between nanosatellites and cooperative spacecraft, for example, is essential for in-orbit swarm services. Time-of-flight (ToF) cameras, which offer anti-interference capability, active illumination, and low power consumption, are becoming more popular for cooperative target pose estimation.

Shuang Li of Nanjing University of Aeronautics and Astronautics developed a pose determination method for cooperative spacecraft using a ToF camera, described in a research paper recently published in Space: Science & Technology. In this setup, a nanosatellite carrying a ToF camera acts as the chaser and performs relative navigation to a target spacecraft fitted with cooperative reflector markers.

First, the author created an embedded system based on the ToF camera. The hardware system consists of an embedded ToF module, a power supply system, a cooperative target, and an upper computer. To identify the target at a greater distance, the developed ToF camera uses eight 850 nm lasers as the driving light source. The ToF camera captures grayscale and depth images.

The ToF system software consists of the embedded server software and the upper-computer client application software. The embedded server software runs on the embedded ToF module's Linux operating system. It handles hardware startup, raw depth data processing, and grayscale data processing, and then receives and responds to client requests. The exchanges with the client cover hardware working-parameter settings and the request and output of depth and grayscale image data.

The embedded server software acts as the operating interface, and the device starts as soon as it is powered on. The client application software is written in C++ on a customized version of Qt 5.9.4 and includes drivers for grayscale and depth image acquisition, image processing, target detection, and pose solution. In operation, the ToF camera alternately feeds grayscale and depth maps to the upper computer.

The pose determination method generates the pose after acquiring image data. The method is divided into three stages: reflector detection, reflector matching, and pose calculation.

The author then introduced the cooperative reflector extraction method, which consists of three components. The reflector markers on the cooperative target are made of special reflective materials that return more light than the background.

The first component segments the grayscale image twice, using a gray threshold and a filtering intensity threshold. The area around the reflector markers is then designated as the active pixel set. The second component locates the candidate reflector blobs inside the preprocessed ToF image, using three criteria to search through its contiguous blobs.
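The segmentation and blob search described above can be illustrated with a minimal sketch. The article does not give the threshold values or the three search criteria, so the function `find_blobs`, the single `gray_thresh` parameter, and the area range used as a stand-in criterion are all assumptions made for illustration, not the author's method.

```python
from collections import deque

import numpy as np


def find_blobs(gray, gray_thresh, min_area, max_area):
    """Threshold a grayscale image and return its 4-connected bright blobs.

    Each blob is a list of (row, col) pixels. The area range is a
    hypothetical stand-in for the paper's unspecified search criteria.
    """
    mask = gray >= gray_thresh              # bright reflector candidates
    seen = np.zeros(mask.shape, dtype=bool)
    rows, cols = mask.shape
    blobs = []
    for r in range(rows):
        for c in range(cols):
            if not mask[r, c] or seen[r, c]:
                continue
            # Breadth-first flood fill over one connected region.
            queue = deque([(r, c)])
            seen[r, c] = True
            pixels = []
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    inside = 0 <= ny < rows and 0 <= nx < cols
                    if inside and mask[ny, nx] and not seen[ny, nx]:
                        seen[ny, nx] = True
                        queue.append((ny, nx))
            if min_area <= len(pixels) <= max_area:
                blobs.append(pixels)
    return blobs
```

In practice a library routine for connected-component labeling would likely replace the explicit flood fill; the loop is written out here only to keep the sketch dependency-light.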

The third component validates the identified reflector blobs. Although the morphology-based detection method is straightforward, it risks missed detections. Since the markers are designed to be circular, an ellipse detection method is used to confirm and supplement the result when the number of detected markers does not equal the designed number.

In general, ellipse detection consists of three steps: extracting edge segments, extracting line segments, and fitting ellipses. The final step is to compute the centroids of the candidate reflectors. The researcher next examined the feature matching approach, covering the derivation of the matching sets, the deterministic annealing algorithm, and the algorithm implementation.
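As a rough illustration of the centroid step, the sketch below computes an intensity-weighted centroid for one blob. The article does not say how the centroids are computed, so the weighting scheme and the name `weighted_centroid` are assumptions.

```python
import numpy as np


def weighted_centroid(gray, pixels):
    """Return the intensity-weighted centroid (x, y) of one blob.

    `pixels` is a list of (row, col) coordinates belonging to the blob;
    brighter pixels pull the centroid toward themselves.
    """
    ys = np.array([p[0] for p in pixels], dtype=float)
    xs = np.array([p[1] for p in pixels], dtype=float)
    weights = np.array([gray[p] for p in pixels], dtype=float)
    total = weights.sum()
    return float((weights * xs).sum() / total), float((weights * ys).sum() / total)
```

Weighting by intensity gives sub-pixel centroids, which is one common choice for bright circular markers; an unweighted mean of the pixel coordinates would be a simpler alternative.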

Once the feature information was obtained, the pose was computed with an estimation approach based on singular value decomposition (SVD).
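A common SVD-based formulation of this step is rigid alignment of matched 3D point sets (often called the Kabsch or Arun method). The sketch below shows that generic technique, assuming the matched reflector positions are available as 3D points in both the target frame and the camera frame; the article does not confirm that the paper uses exactly this formulation, and the name `estimate_pose_svd` is hypothetical.

```python
import numpy as np


def estimate_pose_svd(target_pts, camera_pts):
    """Find R, t such that camera_pts ≈ R @ target_pts + t (least squares).

    Both inputs are (N, 3) arrays of matched points, N >= 3 and not collinear.
    """
    P = np.asarray(target_pts, dtype=float)
    Q = np.asarray(camera_pts, dtype=float)
    p_mean = P.mean(axis=0)
    q_mean = Q.mean(axis=0)
    # Cross-covariance of the centered point sets.
    H = (P - p_mean).T @ (Q - q_mean)
    U, _, Vt = np.linalg.svd(H)
    # Guard against a reflection: force det(R) = +1.
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = q_mean - R @ p_mean
    return R, t
```

The determinant check matters for planar marker layouts, where the unconstrained solution can otherwise come out as a mirror image instead of a rotation.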

The researcher then performed tests to confirm the practicality of the ToF camera for rendezvous and docking. The experimental system consisted of a three-axis turntable, a ToF camera, a target, and software. First, the effectiveness of the detection method was validated using two groups of reflector markers.

The system’s stability was then verified using two groups of typical distance settings. One of the main challenges was that the pitch and yaw angles were strongly coupled, because the imaging axis of the ToF camera was not coaxial with the turntable.

The measurement results were also affected by the parallel calibration of the target plane and the ToF camera. The simulation results show that the updated attitude calculation algorithm removes the coupling and keeps the results accurate and stable.

Additionally, the results along all axes were quite accurate, as shown by comparing the values measured by the ToF camera with those of the turntable. According to the experimental evaluations, the target identification and pose estimation results were precise and successful, and the raw data obtained by the ToF camera had a limited margin of error.

Finally, the author examined two potential future research directions. On the one hand, the self-designed ToF camera was useful only over a fairly narrow range, and its measuring range should be expanded to accommodate more applications. On the other hand, the algorithm only considered a simple structure of reflectors on the target; the proposed method should therefore be investigated with reflectors of more complicated structure and configuration.

Journal Reference:

Zhu, W., et al. (2022) System Design for Pose Determination of Spacecraft Using Time-of-Flight Sensors. Space: Science & Technology. doi.org/10.34133/2022/9763198.