The manufacturing sector currently faces a number of challenges. Technological change, pressing environmental issues and globalisation call for adjustments such as investing in new technologies, conserving resources, and optimising and securing supply chains. Globally operating companies must adapt to a changing environment while also managing supply chain problems, and shifting production back to the domestic market is increasingly an option. This requires not only resilience, but also compliance with strict environmental regulations and cost-efficient strategies that make domestic manufacturing competitive. Moreover, anyone who wants to keep domestic production competitive must overcome personnel bottlenecks. Automation through robotics has long been the driving force here, and artificial intelligence (AI) is increasingly taking on a key role. The technology is developing just as rapidly as the pressure to automate is increasing. For companies that want to map their own production processes with AI, integration that is as simple as possible and shorter training phases are already decisive factors.

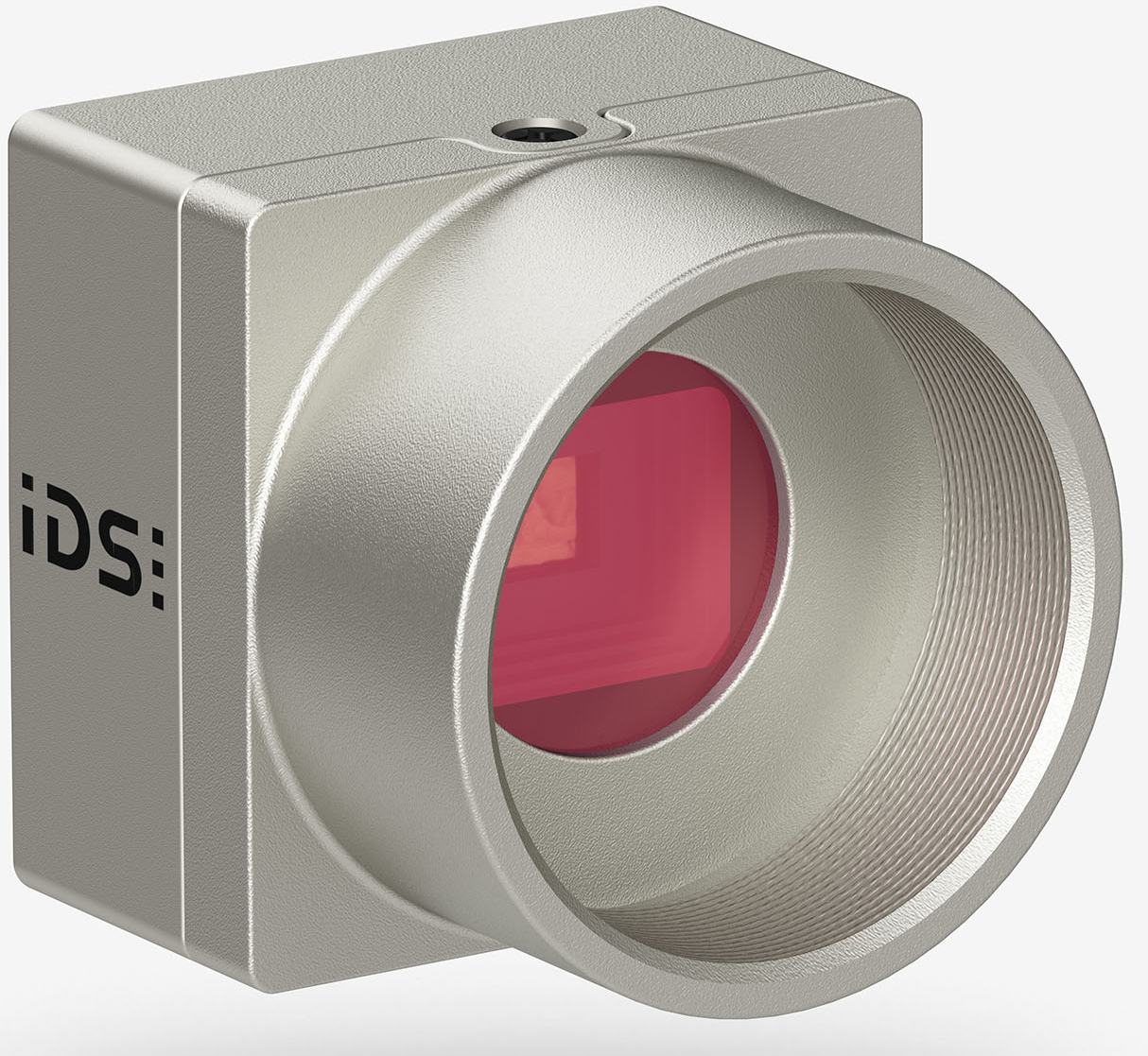

uEye XCP - the industry's smallest housing camera with C-mount. Image Credit: IDS

This is where British start-up Cambrian Robotics Limited comes in with a fully AI-based solution for various robotics applications in manufacturing. It takes over fast bin picking or pick-and-place, the exact feeding of parts for machines as well as different work steps in material handling - for the benefit of more efficiency in assembly tasks or in warehouse logistics. The easy-to-integrate system consists of a module for robot arms, a computing unit with pre-installed intelligent software and a camera module, each equipped in series with two uEye+ XCP cameras from IDS.

"The task of the cameras is to take a picture of the area with the objects to be handled. Based on the recordings, the software can analyse the scene and recognise where exactly the objects are located," explains Miika Satori, founder and CEO of Cambrian Robotics. Further processing of the images is handled by the heart of Cambrian Vision: a specially developed, self-learning software that predicts the position of the parts as well as their pick points. It performs the image matching on an AI basis, so that no classic 3D point cloud is needed. Trained on simulated data, the AI learns independently and locates the pick points and parts extremely precisely. The AI models for part recognition and the communication with the robot run on a powerful GPU (Graphics Processing Unit). And the software learns quickly: "With the Cambrian software package, pick points for new parts can be defined and the application configured within just two to five minutes," emphasises start-up founder Satori.

The associated camera module is equipped with two space-saving uEye XCP industrial cameras. "The two IDS cameras provide images of the object scene from different viewing angles according to the stereo vision principle. The challenge is to determine the position of the part to be gripped as accurately as possible from these images. This is again the task of AI," says Miika Satori. The combination of image acquisition, AI models and special image processing makes it possible to determine pick points and positions particularly precisely. "Standard CAD applications for 3D bin picking often use structured light or sensors to do this, projecting something onto the environment, creating a point cloud and then trying to find the part in it." Cambrian uses only two standard IDS industrial cameras instead of a 3D camera.
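For readers unfamiliar with the stereo vision principle mentioned above, the following is a minimal sketch of the classical textbook relation between disparity and depth for a rectified camera pair. It is not Cambrian's method: the article states that Cambrian Vision uses AI-based image matching instead of a classic point cloud. The function names and all numeric values (focal length, baseline, disparity) are illustrative assumptions and do not come from the article.

```python
def depth_from_disparity(focal_length_px: float, baseline_m: float,
                         disparity_px: float) -> float:
    """Classical pinhole stereo relation Z = f * B / d for a rectified pair.

    focal_length_px: focal length in pixels (assumed value, not from the article)
    baseline_m:      distance between the two camera centres in metres (assumed)
    disparity_px:    horizontal shift of the same point between both images
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_length_px * baseline_m / disparity_px


def depth_error_per_pixel(focal_length_px: float, baseline_m: float,
                          depth_m: float) -> float:
    """Approximate depth change caused by a one-pixel disparity error: Z^2 / (f * B)."""
    return depth_m ** 2 / (focal_length_px * baseline_m)


if __name__ == "__main__":
    # Hypothetical setup: 2000 px focal length, 10 cm baseline, 400 px disparity.
    f_px, baseline = 2000.0, 0.10
    z = depth_from_disparity(f_px, baseline, 400.0)
    err = depth_error_per_pixel(f_px, baseline, z)
    print(f"depth: {z:.3f} m, depth change per pixel of disparity: {err * 1000:.2f} mm")
```

The second function illustrates why precise matching matters: in this assumed setup, a disparity error of a single pixel already shifts the depth estimate by more than a millimetre, so sub-millimetre results require matching at sub-pixel precision.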

With an accuracy of less than one millimetre, Cambrian Vision is also much more precise than competing systems. "The system reliably detects a wide range of parts, including shiny, reflective or transparent components, where conventional machine vision systems often reach their limits. At the same time, it remains robust against external light conditions," says Miika Satori, describing the special requirements for the cameras, which are an elementary part of the solution. "It's also super-fast, with an inference speed of less than 170 milliseconds, whereas it often takes more than 1000 milliseconds for comparable solutions." The fast calculation time allows cycle times of two to three seconds in a bin-picking setting. "This ensures efficient, precise and accurate execution in a single pass," Miika Satori underlines. This makes the One-Shot system currently one of the fastest AI image recognition systems on the market.
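To put the quoted figures into perspective, the following back-of-the-envelope sketch converts the cycle times of two to three seconds into picks per hour and shows what share of a cycle the inference step takes. The 170 ms, 1000 ms and 2 to 3 s values are taken from the text as upper or typical bounds. The helper names are hypothetical, and the calculation assumes continuous operation without downtime, which a real cell will not achieve.

```python
def picks_per_hour(cycle_time_s: float) -> float:
    """Number of pick cycles that fit into one hour of uninterrupted operation."""
    return 3600.0 / cycle_time_s


def inference_share(inference_ms: float, cycle_time_s: float) -> float:
    """Fraction of one cycle that is spent on inference."""
    return inference_ms / (cycle_time_s * 1000.0)


if __name__ == "__main__":
    for cycle in (2.0, 3.0):
        fast = inference_share(170.0, cycle)    # figure quoted for Cambrian Vision
        slow = inference_share(1000.0, cycle)   # figure quoted for comparable solutions
        print(f"cycle {cycle:.0f} s: {picks_per_hour(cycle):.0f} picks/h, "
              f"inference share {fast:.1%} vs. {slow:.1%}")
```

With a two-second cycle, an inference time of 170 ms uses less than a tenth of the cycle, whereas 1000 ms would consume half of it and leave correspondingly less time for the robot motion.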

This is made possible not least by the SuperSpeed USB 5 Gbps cameras, which reliably deliver high-resolution data for detailed image evaluations in any environment, especially in applications with low ambient light or changing light conditions. Thanks to BSI ("Back Side Illumination") pixel technology, the integrated sensor (1/2.5" 5.04 MPixel rolling shutter CMOS sensor onsemi AR0521) offers stable low-light performance as well as high sensitivity in the NIR (near infrared) range, so that the uEye XCPs deliver high-quality images with low pixel noise in almost any lighting situation. With its compact, lightweight full housing (29 x 29 x 17 millimetres, 61 grams) and screwable USB Micro-B connector, the USB3 XCP is particularly suitable for use in combination with robots and cobots in the field of automation.
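As a rough plausibility check of the interface figures above, the sketch below estimates how many uncompressed 5.04 MPixel frames per second a 5 Gbps SuperSpeed USB link could carry at most. The 5 Gbps and 5.04 MPixel values come from the text. The pixel depth of 8 bits and the 8b/10b line-coding efficiency of 0.8 are assumptions, and protocol overhead is ignored. The result is a ceiling imposed by the link only; it says nothing about the actual frame rate of the camera, which the article does not state.

```python
def frame_bits(pixels: float, bits_per_pixel: int) -> float:
    """Size of one uncompressed frame in bits."""
    return pixels * bits_per_pixel


def link_limited_fps(link_bps: float, pixels: float, bits_per_pixel: int,
                     coding_efficiency: float = 0.8) -> float:
    """Upper bound on frames per second imposed by the link alone.

    coding_efficiency=0.8 models 8b/10b line coding (assumption of this sketch);
    USB protocol overhead is not modelled, so real throughput is lower.
    """
    usable_bps = link_bps * coding_efficiency
    return usable_bps / frame_bits(pixels, bits_per_pixel)


if __name__ == "__main__":
    pixels = 5.04e6          # sensor resolution from the article
    link = 5e9               # SuperSpeed USB 5 Gbps from the article
    for depth in (8, 12):    # assumed pixel formats
        fps = link_limited_fps(link, pixels, depth)
        print(f"{depth}-bit pixels: link-limited ceiling of about {fps:.0f} frames/s")
```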

Thanks to USB3 and Vision Standard compatibility (U3V / GenICam), the XCP cameras can be easily integrated into any image processing system and can in principle be used with any suitable software. The simple integration via the standard interface is particularly advantageous for Miika Satori: "Depending on the customer's requirements, we use other IDS cameras in our system. The standardised interface enables rapid deployment of a wide variety of uEye models." Because they are compatible with popular lenses, a wide range of cameras from the IDS portfolio can be used as eyes for custom Cambrian Vision solutions, helping to maximise production performance.

The top speed, the particularly high robustness to changing light conditions and the wide range of components that the system handles thanks to the powerful IDS cameras and intelligent software make it particularly interesting for automation tasks in the production environment.

Another key to efficiency lies in the uncomplicated integration of Cambrian Vision. The intelligent 3D vision system is ready for immediate use without any real robot training - a remarkable acceleration compared to conventional methods. Companies can therefore quickly reap the benefits of automation: They conserve resources and save costs by operating more efficiently, competitively and sustainably, while improving the quality of their products and the safety of their employees.

Outlook

"The use of AI in robotics is still in its infancy," says Miika Satori. Growing demand will drive further development in AI-based image processing: cameras with higher data rates and faster, larger sensors will come onto the market, as will further price-optimised models with reliable basic functions. "Industrial cameras are getting smaller and more affordable. This will enable even more applications. Our vision is to give robots capabilities on the same level as humans." By using AI-powered robots for mundane and repetitive tasks, human resources can be redirected to more creative, productive and valuable tasks.

Camera

uEye XCP - the industry's smallest housing camera with C-mount

Model used: U3-3680XCP

Camera family: uEye XCP